Modern cybersecurity is no longer just about firewalls and antivirus tools. It’s about tracking what happens after data is stolen. The dark web has become a thriving marketplace for leaked credentials, session cookies, and compromised digital identities, putting businesses at constant risk.

This is where Dark Web Monitoring plays a critical role. What was once a niche security practice is now a core part of proactive risk management for organizations of all sizes.

A Dark Web Monitoring API enables businesses to automatically scan hidden marketplaces, forums, and infostealer logs for stolen credentials linked to their users, domains, or employees, all in real time and at scale.

The risk is massive. Security researchers estimate that nearly 1.8 billion credentials were harvested by infostealers within just six months, often bundled with session cookies and browser data that allow attackers to bypass multi-factor authentication entirely.

Since manual detection is no longer feasible, Dark Web Monitoring APIs provide an automated way to identify exposure early, trigger rapid remediation, and reduce the impact of account takeovers before real damage occurs.

The Architecture of the Hidden Web

To understand what these APIs must do, it helps to first understand how the dark web itself is built.

For organizations and professionals seeking a foundational understanding of this hidden ecosystem, its history, its access methods, and the distinction between the deep web and dark web, our comprehensive explainer on the dark web serves as an essential starting point before diving into the complexities of automated monitoring.

The dark web operates as an overlay network focused on anonymity and censorship resistance, making it very different from the surface web, which search engines index and users access through standard protocols.

Several overlay networks exist, and each operates differently, so each calls for its own monitoring strategy.

| Network protocol | Technical mechanism | Relevance for monitoring |
|---|---|---|
| Tor | Multi-layered encryption through random relays (.onion domains) | The main host for ransomware leak sites, hacker forums, and high-volume marketplaces. |
| I2P | Garlic routing through unidirectional network tunnels | Known for encrypted communication and resilient peer-to-peer hidden communities. |
| ZeroNet | Distributed peer-to-peer network built on BitTorrent and Bitcoin cryptography | Used for hosting “uncensorable” data repositories and distributed criminal content. |
| Freenet | Decentralized data store with adaptive routing | Leveraged for long-term storage of sensitive leaked datasets and extremist communications. |
| Telegram / Discord | Encrypted and semi-private messaging channels | The “frontier” where rapid data dumps and attack coordination happen in real time. |

In response to increased law enforcement pressure, 2026 is seeing a broad shift toward decentralization.

Tor remains the best-known network, while the rise of ZeroNet and I2P shows that malicious actors are abandoning centralized platforms to keep their marketplaces and forums running despite law enforcement scrutiny.

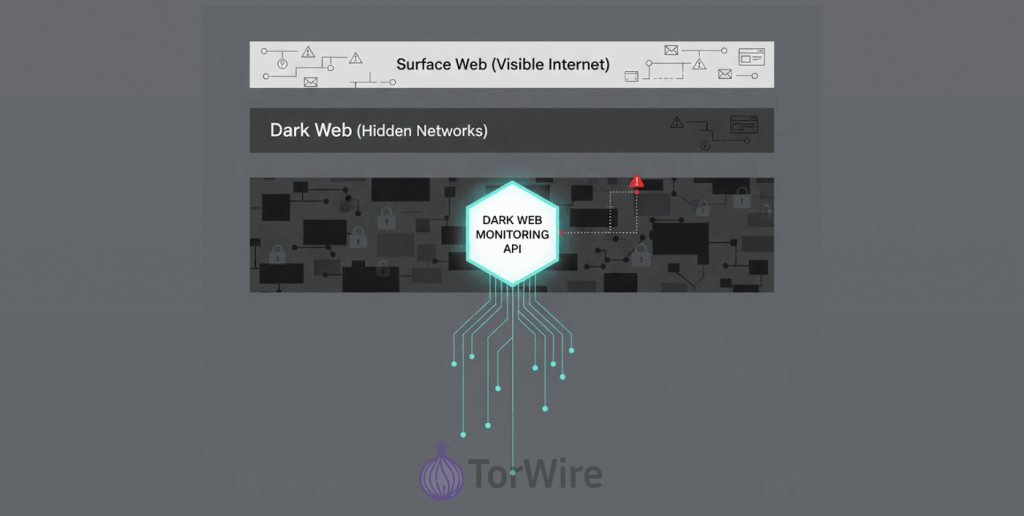

Defining the Dark Web Monitoring API

A Dark Web Monitoring API provides programs with structured access to a curated database of darknet activity.

The API can feed threat intelligence directly into an organization’s existing cybersecurity infrastructure (e.g., a SIEM or SOAR platform). It also offers an alternative to having human analysts search the Tor network by hand, and it removes the administrative and operational barriers that such manual work involves.

The API’s core function is to find specific indicators of compromise (IoCs) across billions of hidden channels and pages. Examples include compromised email addresses, leaked credentials, stolen session tokens, and references to an organization’s brand or executive names.

These data types are the primary commodities traded on underground marketplaces, making a thorough understanding of those platforms, their hierarchies, trust mechanisms, and longevity critical for interpreting the intelligence these APIs return.

With this automated search function, the API converts the “noise” of the dark web into actionable intelligence in real time, at machine speed.
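As a rough illustration of what "structured access" to IoCs can look like, the sketch below parses a JSON response and keeps only the indicators tied to a monitored email domain. The response schema, field names, and sample values are all assumptions made for this example; real providers define their own formats.

```python
import json
from dataclasses import dataclass

# Hypothetical response body. The schema is an assumption for illustration only.
SAMPLE_RESPONSE = """
{
  "results": [
    {"type": "credential", "value": "alice@example.com",
     "source": "stealer_log", "first_seen": "2026-01-10T08:15:00Z"},
    {"type": "session_token", "value": "bob@example.com",
     "source": "forum", "first_seen": "2026-01-11T14:02:00Z"},
    {"type": "credential", "value": "carol@other.org",
     "source": "paste_site", "first_seen": "2026-01-12T09:30:00Z"}
  ]
}
"""


@dataclass(frozen=True)
class Indicator:
    """One indicator of compromise, using the assumed field names above."""
    type: str
    value: str
    source: str
    first_seen: str


def parse_indicators(body: str) -> list[Indicator]:
    """Turn the raw JSON body into typed Indicator records."""
    payload = json.loads(body)
    return [Indicator(**item) for item in payload.get("results", [])]


def for_domain(indicators: list[Indicator], domain: str) -> list[Indicator]:
    """Keep indicators whose value is an email address at the given domain."""
    suffix = "@" + domain.lower()
    return [i for i in indicators if i.value.lower().endswith(suffix)]


indicators = parse_indicators(SAMPLE_RESPONSE)
matches = for_domain(indicators, "example.com")
for match in matches:
    print(match.type, match.value, match.source)
```

Filtering on the organization's own domains at ingestion time is one simple way to cut noise before anything reaches an analyst.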

Mechanisms of Intelligence Collection

The effectiveness of a Dark Web Monitoring API depends on its data collection methods. Various techniques ensure it captures the best information from even the most obscure parts of the Internet.

Automated collection uses specialized crawlers and scrapers that mimic human behavior to bypass anti-crawling measures like CAPTCHA, membership requirements, and onion-network firewalls.

Because these sites are often unstable and hosted on short-lived servers, crawlers must be persistent. They also need to route through proxies and VPNs that conceal their point of origin, so that threat actors do not detect them and collection can continue for as long as possible.

Automation has limits, because high-value criminal forums usually require vetting by an existing member or payment of a fee to gain access. Intelligence providers have developed Virtual HUMINT (Human Intelligence) methods to address these situations. Experienced covert operatives maintain avatars (undercover personas) inside these criminal communities.

Through reputation building and participation in underground conversations, these operatives are able to gain entry into the so-called “closed” communities. This is where planned attacks occur and zero-day vulnerabilities are exchanged.

This human element supplies context that automated tools cannot gather on their own, which allows the API to deliver finished intelligence products rather than raw data alone.

Evaluating Organizational Safety and Efficacy

Security managers often worry that active monitoring could itself expose their company, for example by drawing attention to it or profiling it as a target. To assess whether a dark web monitoring solution is safe, organizations should first determine whether the vendor providing it is reputable.

Reputable API vendors reduce this risk by acting as a buffer between the company’s network and threat actors. Their automated scanning infrastructure and encrypted communication protocols ensure that the organization’s real network infrastructure never comes into contact with a malicious server.

This safeguards your internal systems from a “drive-by” malware attack or a trap set by a hacker looking for researchers. These providers also comply with strict data regulations, keeping all client intelligence secure, encrypted, and accessible only to the client.

The second big question is whether investing in dark web monitoring makes sense. To answer it, look at what a typical data breach costs today. In 2023, the average cost of a data breach was $4.45 million, a figure that covers forensics, legal fees, and regulatory fines, and also factors in the loss of customer trust.

| Cost category | Impact without monitoring | Impact with dark web monitoring API |
|---|---|---|
| Breach detection time | Average dwell time of over 200 days. | Almost live detection (ranges from a few minutes to hours). |
| Ransomware mitigation | Full system encryption and massive ransom | Prevention by resetting credentials before the attack starts. |
| Regulatory fines | Maximum penalties for negligence | Reduced fines by demonstrating proactive “due diligence”. |
| Customer churn | Significant loss of trust and 63% churn | Mitigated by being the first to notify and protect users. |

In essence, a Dark Web Monitoring API works like high-yield insurance for your organization. If the service detects a single set of administrative credentials in a “stealer log” before an attacker uses them to deploy ransomware, it has paid for itself several years over.

Dark Web Monitoring for Business: Expanding the Attack Surface

The idea of a “perimeter” in 2026 is nearly gone; employees are working remotely, using their own devices to do their jobs and accessing third-party SaaS applications. Therefore, to properly conduct dark web monitoring for businesses, companies must take into consideration the “external attack surface” that extends well beyond the corporate network.

Info-stealer malware poses an ever-increasing risk for today’s businesses. Unlike traditional viruses, which typically damage files, info-stealers (e.g., RedLine, Vidar, Raccoon) focus on extracting everything within a user’s browser, including passwords, autofill information, and, most importantly, session cookies.

Attackers can hijack company apps using stolen session cookies from dark web logs, bypassing MFA. A business-grade monitoring API can detect such stolen logs by tracking associated domains or IP ranges.

Upon identifying a match, the system provides not only a detailed browser profile but also host details linked to the infection. This enables the IT department to do more than just reset the user’s password: technicians can re-image the device with a clean OS and revoke all of the user’s cloud sessions.
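The matching and response steps described here can be sketched as follows. This is a minimal illustration, not any vendor's workflow: the record shape, the `MONITORED_DOMAINS` watchlist, and the action strings are hypothetical names chosen for the example.

```python
from dataclasses import dataclass

# Assumed watchlist of corporate domains; a real deployment would load this from config.
MONITORED_DOMAINS = {"example.com"}


@dataclass(frozen=True)
class StealerLogEntry:
    """Hypothetical shape of one stealer-log record returned by a monitoring API."""
    login_host: str       # site the stolen credential or cookie belongs to
    username: str
    infected_device: str  # hostname of the machine the malware ran on


def is_monitored(host: str, domains: set[str] = MONITORED_DOMAINS) -> bool:
    """True if host equals a monitored domain or is a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in domains)


def plan_remediation(entry: StealerLogEntry) -> list[str]:
    """Return the ordered response actions for a matching entry, else nothing."""
    if not is_monitored(entry.login_host):
        return []
    return [
        f"revoke_sessions:{entry.username}",   # stolen cookies stay valid until revoked
        f"reset_password:{entry.username}",
        f"reimage_device:{entry.infected_device}",
    ]


entry = StealerLogEntry("sso.example.com", "alice", "LAPTOP-0042")
print(plan_remediation(entry))
```

Session revocation is listed first in this sketch because a stolen cookie remains usable even after a password reset. The suffix check requires a leading dot, so a lookalike host such as `notexample.com` does not match.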

Supply chain risk management also relies heavily on monitoring. Many security breaches originate with a compromised third-party supplier rather than with the target organization itself. Organizations can use a Dark Web Monitoring API to track key suppliers.

Such monitoring can flag any leak that could expose the organization’s data through a third-party channel, providing an early warning.

The Evolution of Consumer Protection: Dark Web Monitoring Google

For many years, Google was one of the best-known names in this field among the general public. In early 2026, however, Google announced that it would discontinue its Google Dark Web Report, which drastically altered the landscape.

The move draws a clear line between basic alert systems for consumers and intelligence systems that give enterprise customers high-fidelity data on breaches surfacing on the dark web. The “Dark Web Monitoring by Google” feature let average users search breached databases for their email addresses and phone numbers.

It was useful for a user who wanted personal awareness. However, it offered too few “actionable steps” and lacked the real-time speed that is essential to a business. Google’s decision to retire the feature reflects the complexity and evolving nature of today’s darknet threats, particularly real-time stealer logs and encrypted Telegram channels.

Covering those sources requires specialized providers with enough resources to conduct deep, continuous scanning. The change also exposes a significant gap for organizations that relied on consumer-grade tools to achieve “checkbox” compliance.

Without an enterprise-grade API, organizations lack visibility into attacks that rely on fresh, current data. Access to this live data is critical: historical breach dumps describe past exposure, whereas freshly harvested, downloadable stealer logs contain credentials and sessions that attackers can use right away. That is what makes them an imminent risk to your organization.

Technical Integration and Developer Best Practices

Integrating a dark web monitoring API into a security framework isn’t simply a matter of signing up for an API key. It means building an automated, resilient workflow around how the data stream (in this case, the API responses) enters your environment and what happens to it once it arrives.

Formalized Journey for Integrating with Both SIEM and SOAR

Following a formal, regularly scheduled integration process ensures that alerts are handled in a standardized way, which reduces alert fatigue.

| Integration step | Technical action | Operational outcome |
|---|---|---|
| Define Objectives | Identify the target assets (IP addresses, domain names, etc.) you want monitored. | Eliminates noise and helps identify high-value targets. |
| Authentication | Configure OAuth 2.0 or mTLS with secure credential storage. | Maintains the integrity of the intelligence feed. |
| Normalization | Convert JSON or XML payloads into a normalized format such as STIX (exchanged over TAXII). | Permits the SIEM to join dark web information to internal logs. |
| Playbook Design | Script automated responses for specific alert types. | Reduces response time from hours to seconds. |
| Testing and Tuning | Run simulations to identify and reduce false positives. | Ensures the security team focuses on genuine threats. |
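To make the normalization step concrete, the sketch below maps a leaked email address to a STIX 2.1 Indicator object built as a plain dictionary. It deliberately avoids any STIX library, and the choice of fields, the `name` and `description` wording, and the `to_stix_indicator` helper are assumptions for illustration; a production pipeline would validate objects against the specification.

```python
import json
import uuid
from datetime import datetime, timezone


def to_stix_indicator(email: str, source: str, seen_at: datetime) -> dict:
    """Build a STIX 2.1 Indicator (as a dict) for a leaked email address."""
    # STIX timestamps are RFC 3339 strings in UTC.
    ts = seen_at.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%S.000Z")
    # Escape backslashes and single quotes for the STIX pattern string literal.
    escaped = email.replace("\\", "\\\\").replace("'", "\\'")
    return {
        "type": "indicator",
        "spec_version": "2.1",
        "id": f"indicator--{uuid.uuid4()}",
        "created": ts,
        "modified": ts,
        "name": f"Leaked credential for {email}",
        "description": f"Observed in {source}",
        "indicator_types": ["compromised"],
        "pattern": f"[email-addr:value = '{escaped}']",
        "pattern_type": "stix",
        "valid_from": ts,
    }


indicator = to_stix_indicator(
    "alice@example.com",
    "stealer_log",
    datetime(2026, 1, 10, 8, 15, tzinfo=timezone.utc),
)
print(json.dumps(indicator, indent=2))
```

Once alerts share one format like this, the SIEM can correlate them with internal authentication logs using the same field names regardless of which feed produced them.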

Advanced Developer Considerations

For integration developers, security is an important consideration when using webhooks, one of the most common ways to deliver alerts in real time. Without proper safeguards, attackers can spoof webhooks. Commonly recommended best practices include:

- HMAC signatures: The sender signs every webhook payload with a shared secret key and places the resulting HMAC signature in a request header. The recipient recomputes the signature to confirm that the request came from the expected sender and that the payload was not altered.

- Protection from replay attacks: Each webhook payload includes a timestamp, and the receiver rejects any webhook older than five minutes.

- Rate limiting: Integrations must absorb an extreme, sudden surge of data, such as a large breach dump, without crashing the SIEM.

- IP allowlisting: The organization should accept traffic only from the specific IP ranges that the API provider uses.

Compliance, Regulation, and the Law

In 2026’s regulatory landscape, there is a clear link between Dark Web Monitoring APIs and a business’s legal compliance. The relevant laws and standards include GDPR, HIPAA, and PCI-DSS, all of which expect firms to protect data proactively rather than merely react to violations.

GDPR and the “Proactive” Requirement

Under the General Data Protection Regulation (GDPR), organizations must implement appropriate technical measures to protect personal data.

Article 33 of the GDPR requires personal data breaches to be reported to the supervisory authority without undue delay and, where feasible, within 72 hours of the organization becoming aware of them. An organization could incur a significant fine if it does not detect an information leak in a timely manner.

Without dark web monitoring, detection could come several months after the breach occurred, potentially leading to multi-million-dollar penalties. Detecting a leak early through an API lets the organization activate its response plan immediately, which may reduce the regulatory penalties it incurs.
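Because the Article 33 clock (72 hours under the GDPR) starts when the organization becomes aware of a breach, a response plan can compute the notification deadline the moment an alert is confirmed. The sketch below is a minimal illustration; the function names are hypothetical, and it treats the confirmed-alert time as the moment of awareness, which is a simplifying assumption rather than legal guidance.

```python
from datetime import datetime, timedelta, timezone

# GDPR Article 33(1): notify within 72 hours of becoming aware, where feasible.
NOTIFICATION_WINDOW = timedelta(hours=72)


def notification_deadline(aware_at: datetime) -> datetime:
    """Latest time the supervisory authority should be notified."""
    return aware_at + NOTIFICATION_WINDOW


def hours_remaining(aware_at: datetime, now: datetime) -> float:
    """Hours left before the deadline (negative once it has passed)."""
    return (notification_deadline(aware_at) - now).total_seconds() / 3600


aware_at = datetime(2026, 3, 2, 9, 0, tzinfo=timezone.utc)
now = datetime(2026, 3, 3, 9, 0, tzinfo=timezone.utc)
print(notification_deadline(aware_at).isoformat())
print(hours_remaining(aware_at, now))
```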

HIPAA and Healthcare Resilience

The healthcare industry has a legal obligation to protect individuals’ Protected Health Information (PHI), and protecting PHI also matters for the overall safety and security of patients.

Ransomware attacks on hospitals whose credentials had previously been stolen show how often this occurs. Hospitals must now keep track of their “environment” at all times. Much as the GDPR requires “appropriate technical measures” to safeguard personal data, the HIPAA Security Rule requires technical safeguards for electronic PHI.

Credentials that grant access to PHI remain some of the most sought-after targets for attackers, because they allow patient data to be exfiltrated.

PCI-DSS 4.0 and Beyond

The Payment Card Industry Data Security Standard (PCI-DSS) has long required organizations to monitor their networks. The latest versions of the PCI-DSS have placed greater emphasis on securing the entire “cardholder data environment” and increased the importance of monitoring these environments.

Dark web monitoring provides an early warning of “carding” activity, in which criminals test stolen credit card numbers and then sell them to other criminals. This lets financial institutions deactivate compromised cards before fraudsters can use them.

Case Studies: When Monitoring Saved the Day

Several real-world incidents show the value of Dark Web Monitoring API capabilities, where early detection of data loss prevented major disasters.

The Coinbase Response (May 2025)

In 2025, attackers bribed members of an external support team that served Coinbase customers to steal user data. They then demanded a $20 million ransom from Coinbase over the stolen data.

However, Coinbase was able to identify the breach very quickly. This was possible because Coinbase utilized advanced external risk management measures and had checks in place to monitor for illegal sharing of its data.

Brian Armstrong, the CEO, refused to pay the ransom, cooperated with law enforcement by collecting information on the attack, and terminated the employees involved. He also offered a reward to anyone who could help identify those responsible.

The incident cost the company millions in remediation. However, the proactive approach of the company ultimately saved it from a multi-billion-dollar collapse of its reputation.

Lessons from Target and Home Depot

Attackers breached Target and Home Depot in the mid-2010s by stealing vendor access credentials. Although both companies detected unusual activity, their teams overlooked the alerts due to the organizations’ size. Today, companies prevent such failures by using priority scoring for security alerts.

For example, an alert that data has appeared on a highly trusted criminal forum generally receives a much higher priority score than an alert that data has appeared on a low-trust or public paste site. This process ensures teams address the highest-priority threats first.
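One simple way to implement such priority scoring is to multiply a trust weight for the source by a sensitivity weight for the exposed asset. The sketch below is illustrative only: the category names and every weight are invented for the example, and a real deployment would tune them against its own alert history.

```python
from dataclasses import dataclass

# Assumed weights for illustration; not taken from any vendor or standard.
SOURCE_TRUST = {
    "vetted_forum": 0.9,      # closed, high-trust criminal forum
    "stealer_log": 0.8,
    "telegram_channel": 0.6,
    "public_paste": 0.3,      # low-trust or public paste site
}
ASSET_WEIGHT = {
    "admin_credential": 1.0,
    "session_cookie": 0.9,
    "user_credential": 0.6,
    "brand_mention": 0.2,
}


@dataclass(frozen=True)
class Alert:
    alert_id: str
    source: str
    asset: str


def priority(alert: Alert) -> float:
    """Score from 0 to 100; unknown categories fall back to a low weight."""
    trust = SOURCE_TRUST.get(alert.source, 0.1)
    weight = ASSET_WEIGHT.get(alert.asset, 0.1)
    return round(100 * trust * weight, 1)


def triage(alerts: list[Alert]) -> list[Alert]:
    """Order alerts so the highest-priority ones are handled first."""
    return sorted(alerts, key=priority, reverse=True)


alerts = [
    Alert("A-1", "public_paste", "user_credential"),
    Alert("A-2", "vetted_forum", "admin_credential"),
    Alert("A-3", "stealer_log", "session_cookie"),
]
for alert in triage(alerts):
    print(alert.alert_id, priority(alert))
```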

Future Plans for 2026 Include More Use of AI

Both attackers and defenders on the dark web are rapidly expanding their use of Artificial Intelligence (AI). Cybercriminals are leveraging generative AI tools to develop polymorphic malware, which automatically modifies its own structure to evade detection based on known patterns.

Hackers are also using AI-generated audio and video to carry out realistic corporate kidnapping scams and fake-CEO scams. To combat this, Dark Web Monitoring APIs are adopting advanced machine learning models. By using NLP to analyze thousands of forum posts per day, these models can recognize “malicious intent” even if the threat actors use slang or coded terms.

AI classifiers can help predict which “chatter” is likely to result in an actual attack by examining historical patterns in threat actor behavior. Meanwhile, cybercriminals without much technical background can now produce sophisticated, credible phishing emails and exploit scripts. This is due to the rise of “Criminal LLMs,” i.e., unmoderated, unfiltered counterparts to large language models such as ChatGPT.

The only answer to this “democratization of cybercrime” is a similar democratization of defense: giving businesses of all sizes high-quality, automated intelligence through an API.

Conclusion

The shift from manual security audits to automated threat intelligence marks a turning point in modern cybersecurity. As dark web criminal networks operate like full-scale businesses, a Dark Web Monitoring API enables organizations to detect stolen credentials and session cookies before attackers can exploit them.

Early detection can prevent costly ransomware incidents and regulatory fines, making dark web monitoring a clear risk-reduction strategy. In 2026’s AI-driven threat landscape, real-time, programmatic monitoring is no longer optional; it’s a security necessity and a competitive advantage.

This assessment is reinforced by expert analyses tracking the acceleration of cybercrime, which consistently identify the weaponization of AI and the proliferation of stealer malware as defining trends that demand a proactive, intelligence-led defense posture.

FAQs

Dark web monitoring APIs are software tools that automatically scan hidden networks such as Tor, I2P, and encrypted forums for stolen credentials, leaked data, and brand mentions. Unlike manual searches, they deliver real-time alerts directly to an organization’s security systems, enabling faster response to emerging threats.

Dark web APIs use custom crawlers and scrapers to navigate underground forums and illicit marketplaces while bypassing anti-crawler defenses like CAPTCHA. They then apply AI and natural language processing (NLP) to analyze raw data, identify high-priority threats, and deliver results in structured formats such as JSON or XML.

In general, monitoring that is passive or limited to publicly available information, such as content on social networks, is legal. Reputable API providers comply with the privacy laws under which they operate and act as a “safe broker” between your organization and criminal actors.

Using a professional service is highly secure, as reputable providers use automated and protected investigative tools. Your internal company network never has to communicate directly with criminal dark web servers.

Indeed, current enterprise-quality APIs specifically track “stealer logs,” which hold the most up-to-date credentials and browser session cookies taken from malware infections. By identifying these logs, security teams can reset user passwords and revoke active sessions before an attacker has an opportunity to act.

Any industry with a digital presence faces the risk of data breaches, but sectors with high-value data and strict regulatory exposure benefit the most from dark web monitoring APIs. Banking, healthcare, retail, and government organizations face the greatest financial and compliance risks under regulations like GDPR and HIPAA, making early detection especially critical.